The Cockpit

I got my pilot's license before most people got their first apartment. Private, commercial, instrument-rated. The whole progression, done young and done fast. There's something about flying that rewires how you think. You learn to read instruments under pressure, navigate complex systems in real time, and make decisions when the margin for error is literally zero.

The cockpit taught me that confidence isn't about knowing everything. It's about knowing what matters right now and acting on it. That lesson followed me into everything I've done since.

Before AI, There Was the Cockpit

Roughly 200 hours in the cockpit taught me how to think under pressure: cross-country flights at 12,000 feet, stall training, and night flying where your instruments matter more than intuition.

That's where System 1 vs. System 2 became practical, not theoretical. Recognize fast when instinct is useful, then deliberately switch to checklist-driven reasoning when the stakes are high.

Different domain, same operating system I use in AI today: trust instrumentation, run procedure, and design for edge cases before they become failures.

Purdue / Krannert

Purdue's Krannert School of Management taught me to break business problems into testable pieces and make decisions with data, not vibes. Case competitions were training: solve fast, defend your answer. Won a Boeing case. That moment made it clear: I didn't want to analyze systems. I wanted to build them.

Crowe, then EY

I started by auditing financial statements at Crowe. The work was rigorous and high-accountability. Also repetitive enough that I kept asking how much of it could be systematized.

That question pushed me toward automation and into EY as a data engineer, where I built data pipelines that reduced manual work. This was before "data science" was a thing, but the pattern was already there: instrument the process, automate where you can measure quality.

Uber: ML at Global Scale

I joined Uber at one of the most intense moments in the company's history. The London license was threatened. Regulatory pressure was coming from every direction. The company was fighting for its survival on multiple fronts simultaneously, and the machine learning systems had to work. Not in theory, not in a notebook, but in production at global scale.

That's where I learned what it really means to ship models that matter. Not models that look good in a presentation. Models that have to make real decisions for millions of people, every day.

Master's in Data Science @ UC Berkeley

I did a Master's in Data Science at UC Berkeley while working full-time. I was focused on deep learning and scalable data systems. One project was text detoxification: build a model that rewrites toxic content without losing the original meaning. Hard problem. Stuck with me.

Applied Data Science Capstone

In Berkeley's AI-focused Master's in Data Science program, I strengthened the full stack: machine learning foundations, experimentation design, and production-grade model evaluation.

For the capstone, I built and presented an end-to-end applied AI project: problem framing, model iteration, and outputs a real team could use.

What I learned here still drives my approach: connect research to product outcomes, make quality measurable, and design systems teams can actually ship and trust.

Data Scientist @ Messenger

At Messenger, I shipped ML products to billions of users. The pattern: high-quality zero-to-one launches built on technical depth and product judgment, working closely with engineers and PMs.

By 2024, I was leading Meta AI's largest Messenger update: from Tab to Search integration and beyond.

2022: Launched calling on the Facebook App, growing to 11M+ monthly active calling users.

2023: Launched Messenger in Virtual Reality for Meta Quest.

2023: Launched AI Stickers and /imagine, bringing generative creativity into everyday conversations.

2023: Launched Meta AI and AI Characters, the first LLM-powered experiences in Messenger.

2024: Launched Meta AI's largest feature bundle update in Messenger, including the Meta AI Tab and Meta AI in Search.

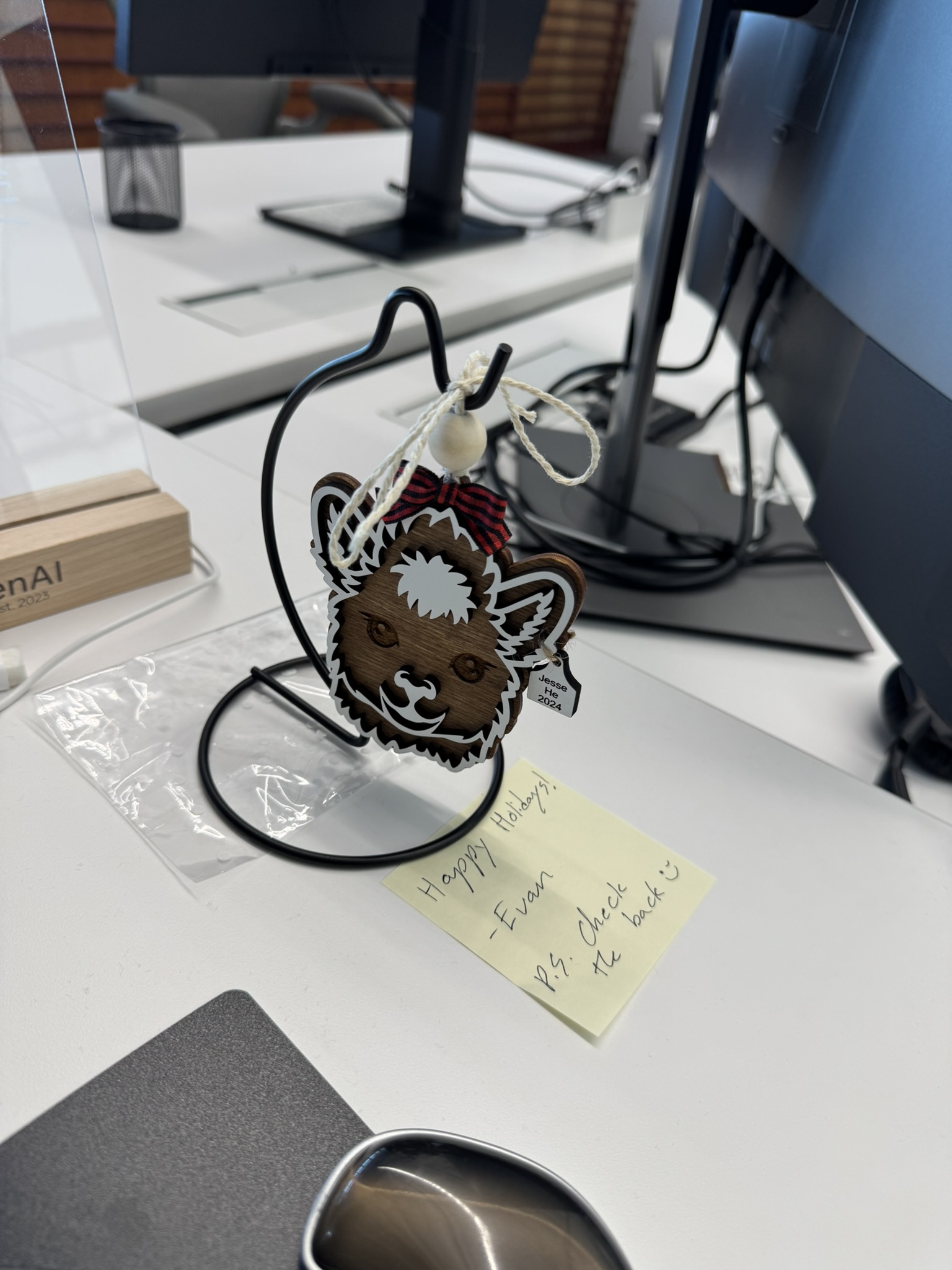

Research Data Scientist @ Meta Superintelligence Labs

At Meta Superintelligence Labs, I moved to the bleeding edge: foundation-model quality, data curation, and evaluation systems. Same cockpit discipline, just at model scale.

2024: Supported the launch of Llama 3 through RLHF and SFT data evaluation; defined and measured annotation quality, analyzed correlations with reward-model scores, and contributed to the official publication.

2024: Established the QA and data quality framework for Llama 4 post-training across Coding, Reasoning, Multilingual, Multimodal, and Voice domains. Shipped a scalable validation pipeline to annotation vendors.

2024: Enhanced Llama reward-model performance for Coding by redefining annotation standards and conducting deep analyses of vendor data pipelines.

2025: Led Llama 4 post-training data decontamination, improving spend efficiency and model reliability by identifying and removing test-set-similar data using SONAR embeddings.

2025: Built the data foundation for the next iteration of Movie Gen, Meta's video generation model. Used ViCLIP and the Perception Encoder to define and measure video data quality. Worked across research and product teams to build balanced, high-quality pre-training datasets.

Now: Post-training on Meta's next-generation AI systems. Defining data quality standards and curating the data mix for reinforcement learning. The work that determines what the model learns to value.
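
For readers curious what embedding-based decontamination looks like in practice, here is a minimal sketch of the idea from the 2025 entry above. It is not the actual Llama 4 pipeline. The tiny hand-written vectors stand in for real SONAR sentence embeddings, and the `decontaminate` helper and the 0.9 threshold are hypothetical choices made only for illustration.

```python
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def decontaminate(
    train: List[Vector], test: List[Vector], threshold: float = 0.9
) -> Tuple[List[int], List[int]]:
    """Split training indices into (kept, removed).

    A training example is removed when its highest similarity to any
    test-set example reaches the threshold (an assumed cutoff).
    """
    kept: List[int] = []
    removed: List[int] = []
    for idx, vec in enumerate(train):
        max_sim = max((cosine_similarity(vec, t) for t in test), default=0.0)
        (removed if max_sim >= threshold else kept).append(idx)
    return kept, removed


# Toy stand-ins for sentence embeddings (assumption: real ones are high-dimensional).
train_embeddings = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.9, 0.1, 0.0]]
test_embeddings = [[1.0, 0.05, 0.0]]

kept, removed = decontaminate(train_embeddings, test_embeddings, threshold=0.9)
print("kept:", kept, "removed:", removed)
```

The brute-force comparison is fine for a toy example; at real dataset sizes an approximate nearest-neighbor index would likely replace the inner loop, and the threshold would need to be tuned against manually reviewed pairs.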

At the Frontier

The path from the cockpit leads here. Frontier AI research at Meta Superintelligence Labs. Every week, Softmax: strategy for the people who build real things.